BankClassify: simple automatic classification of bank statement entries

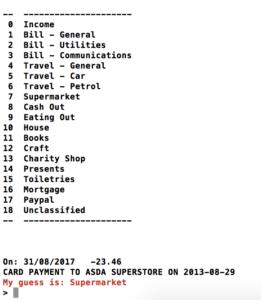

This is another entry in my ‘Previously Unpublicised Code’ series – explanations of code that has been sitting on my Github profile for ages, but has never been discussed publicly before. This time, I’m going to talk about BankClassify a tool for classifying transactions on bank statements into categories like Supermarket, Eating Out and Mortgage automatically. It is an interactive command-line application that looks like this:

For each entry in your bank statement, it will guess a category, and let you correct it if necessary – learning from your corrections.

I’ve been using this tool for a number of years now, as I never managed to find another tool that did quite what I wanted. I wanted to have an interactive classification process where the computer guessed a category for each transaction but you could correct it if it got it wrong. I also didn’t want to be restricted in what I could do with the data once I’d categorised it – I wanted a simple CSV output, so I could just analyse it using pandas. BankClassify meets all my needs.

If you want to use BankClassify as it is written at the moment then you’ll need to be banking with Santander – as it can only important text-format data files downloaded from Santander Online Banking at the moment. However, if you’ve got a bit of Python programming ability (quite likely if you’re reading this blog) then you can write another file import function, and use the rest of the module as-is. To get going, just look at the README in the repository.

So, how does this work? Well it uses a Naive Bayesian classifier – a very simple machine learning tool that is often used for spam filtering (see this excellent article by Paul Graham introducing its use for spam filtering). It simply splits text into tokens (more on this later) and uses training data to calculate probabilities that text containing each specific token belongs in each category. The term ‘naive’ is used because of various naive, and probably incorrect, assumptions which are made about independence between features, using a uniform prior distribution and so on.

Creating a Naive Bayesian classifier in Python is very easy, using the textblob package. There is a great tutorial on building a classifier using textblob here, but I’ll run quickly through my code anyway:

First we load all the previous data from the aptly-named AllData.csv file, and pass it to the _get_training function to get the training data from this file in a format acceptable to textblob. This is basically a list of tuples, each of which contains (text, classification). In our case, the text is the description of the transaction from the bank statement, and the classification is the category that we want to assign it to. For example ("CARD PAYMENT TO SHELL TOTHILL,2.04 GBP, RATE 1.00/GBP ON 29-08-2013", "Petrol"). We use the _extractor function to split the text into tokens and generate ‘features’ from these tokens. In our case this is simply a function that splits the text by either spaces or the '/' symbol, and creates a boolean feature with the value True for each token it sees.

Now we’ve got the classifier, we read in the new data (_read_santander_file) and the list of categories (_read_categories) and then get down to the classification (_ask_with_guess). The classification just calls the classifier.classify method, giving it the text to classify. We then do a bit of work to nicely display the list of categories (I use colorama to do nice fonts and colours in the terminal) and ask the user whether the guess is correct. If it is, then we just save the category to the output file – but if it isn’t we call the classifier.update function with the correct tuple of (text, classification), which will update the probabilities used within the classifier to take account of this new information.

That’s pretty-much it – all of the rest of the code is just plumbing that joins all of this together. This just shows how easy it is to produce a useful tool, using a simple machine learning technique.

Just as a brief aside, you can do interesting things with the classifier object, like ask it to tell you what the most informative features are:

Most Informative Features

IN = True Cheque : nan = 6.5 : 1.0

UNIVERSITY = True Cheque : nan = 6.5 : 1.0

PAYMENT = None Cheque : nan = 6.5 : 1.0

COWHERDS = True Eating : nan = 6.5 : 1.0

CARD = None Cheque : nan = 6.5 : 1.0

CHEQUE = True Cheque : nan = 6.5 : 1.0

TICKETOFFICESALE = True Travel : nan = 6.5 : 1.0

SOUTHAMPTON = True Cheque : nan = 6.5 : 1.0

CRAFT = True Craft : nan = 4.3 : 1.0

LTD = True Craft : nan = 4.3 : 1.0

HOBBY = True Craft : nan = 4.3 : 1.0

RATE = None Cheque : nan = 2.8 : 1.0

GBP = None Cheque : nan = 2.8 : 1.0

SAINSBURYS = True Superm : nan = 2.6 : 1.0

WAITROSE = True Superm : nan = 2.6 : 1.0

Here we can see that tokens like IN, UNIVERSITY, PAYMENT and SOUTHAMPTON are highly predictive of the category Cheque (as most of my cheque pay-ins are shown in my statement as PAID IN AT SOUTHAMPTON UNIVERSITY), and that CARD not existing as a feature is also highly predictive of the category being cheque (fairly obviously). Names of supermarkets also appear there as highly predictive for the Supermarket class and TICKETOFFICESALE for Travel (as that is what is displayed on my statement for a ticket purchase at my local railway station). You can even see some of my food preferences in there, with COWHERDS being highly predictive of the Eating Out category.

So, have a look at the code on Github, and have a play with it – let me know if you do anything cool.

If you found this post useful, please consider buying me a coffee.

This post originally appeared on Robin's Blog.

Categorised as: Previously Unpublicised Code, Programming, Python

Does it mean if I’m using this classifier for the first time I’ll have to manually label everything?

Hi Robin,

Found your approach truly inspirational.

I need this in my life!

One thing I was wondering if it would be possible to add splitting functionality?

I find too many of our purchases go trough amazon with multiple items.

As for the statement data.

Not sure if you’re familiar with plaid but you could open a dev account for free that would give you API access to majority of UK banks. Saving you a lot of time downloading csvs.